Over the past month, the scholarly world has been cranking out new insights — some profound, some obscure, and some useful for newsrooms and media producers of all kinds. Meanwhile, Nicholas Kristof has kicked off yet another round of debate about whether academics are engaged enough (see Ezra Klein for the latest salvo, on gated academic journals and their consequences).

Amid all that, there are indeed some good knowledge nuggets coming from the halls of academe. Recent themes: Know thy network. Beware the rise of journo bots. And milk those Twitter users for cash. More on those below, where you’ll find a sampling of recent papers and their findings.

(Note: This article was first posted at Nieman Journalism Lab, as part of an ongoing collaboration.)

________

“Mapping Twitter Topic Networks: From Polarized Crowds to Community Clusters”

From the Pew Research Internet Project. By Marc A. Smith, Lee Rainie, Ben Schneiderman and Itai Himelboim.

This important new study, done in collaboration with academic researchers Schneiderman (University of Georgia) and Himelboim (University of Maryland), goes a long way toward making social network analysis and theory intelligible to the general public. In a clean, straightforward way, it lays out the six basic “archetypes” of Twitter conversation, giving precise language to phenomena many of us observe at only an intuitive level (and yet which researchers have observed for some time).

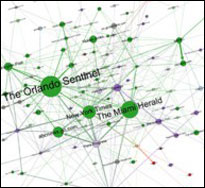

Having analyzed millions of tweets, the researchers conclude that political discussions often show “polarized crowd” characteristics, whereby a liberal and conservative cluster are talking past one another on the same subject, largely relying on different information sources. Of course, you still see old “hub and spoke” dynamics, or “broadcast networks,” where mainstream media are still doing the agenda-setting. But there are novel networks, too: “Support” networks that form around customer complaints, which looks like “hub and spoke” but also involves more two-way conservation; “tight crowds” involving niche interests, hobbies and professional groups; “brand clusters” around topics of mass interest (celebrities, for example) that primarily feature “isolates,” or people talking about the same subject but not to one another; and “community clusters” that “look like bazaars with multiple centers of activity” and which “can illustrate diverse angles on a subject based on its relevance to different audiences, revealing a diversity of opinion and perspective on a social media topic.”

Related: A new study in the Journal of Communication, “Social Media, Network Heterogeneity, and Opinion Polarization,” by Jae Kook Lee, Jihyang Choi, and Cheonsoo Kim of Indiana University and Yonghwan Kim of the University of Alabama, demonstrates the importance of news-related activities on social networks. Getting news, posting news and talking about politics on Twitter and Facebook seem to be associated with having a more diverse social network. Overall, the “role played by social media in the realm of public opinion is not simply optimistic or pessimistic,” the researchers conclude.

“The Battle for ‘Trayvon Martin’: Mapping a Media Controversy Online and Off-line”

From the MIT Center for Civic Media, published in First Monday. By Erhardt Graeff, Matt Stempeck, and Ethan Zuckerman.

The Nieman Journalism Lab’s Caroline O’Donovan has published a wonderful explainer on this study — worth checking out if you missed it. The study represents an ambitious effort to map public discourse around a national news topic — its ebb and flow, its catalysts, magnifiers and gatekeepers alike. How exactly do stories move across the wide array of information channels we use? The researchers conclude: “Our analysis finds that gatekeeping power is still deeply rooted in broadcast media…. Without the initial coverage on newswires and television, it is unclear that online communities would have known about the Trayvon Martin case and been able to mobilize around it.” Effective public relations by parties involved saved the story from vanishing initially, and social media jumped on the bandwagon only later.

Graeff, Stempeck, and Zuckerman contribute important insights into the networked ecosystem of communication and news. The paper is a direct follow-on to an earlier paper by Internet theorist Yochai Benkler and others that suggested new network dynamics at work around the Stop Online Piracy Act (SOPA/PIPA) and related online activism. Both papers leverage the underappreciated Media Cloud project, which is finally getting its due. Graeff, Stempeck and Zuckerman basically show a kind of counter-example to the Benkler findings. This scholarly back-and-forth is well worth paying close attention to, as MIT and Harvard’s Berkman Center have more papers in the pipeline along these lines. If we are to answer the ultimate digital media question — “How much has the Internet truly changed communication?” — this research will be a vital resource in providing the data.

“Enter the Robot Journalist: Users’ Perceptions of Automated Content”

From Karstad University (Sweden), published in Journalism Practice. By Christer Clerwall.

The study sets out to see how, among a small sample of undergraduates, people might judge differences between news content written by human journalists and by computers. The sample articles focused around National Football League topics. The subjects were basically unable to tell the difference between the two articles, and indeed on average found the computer-generated article more credible. Clerwall concludes: “Perhaps the most interesting result in the study is that there are no (with one exception) significant differences in how the two texts are perceived by the respondents. The lack of difference may be seen as an indicator that the software is doing a good job, or it may indicate that the journalist is doing a poor job — or perhaps both are doing a good (or poor) job?” He asks a provocative and, for many in the media industry, scary question: “If journalistic content produced by a piece of software is not (or is barely) discernible from content produced by a journalist, and/or if it is just a bit more boring and less pleasant to read, then why should news organizations allocate resources to human writers?”

“Inferring the Origin Locations of Tweets with Quantitative Confidence”

From Los Alamos National Laboratory and Illinois Institute of Technology, presented at ACM’s February 2014 Computer Supported Cooperative Work conference. By Reid Priedhorsky, Aron Culotta, and Sara Y. Del Valle.

This paper demonstrates that, although only a tiny fraction of people enable geolocation on their tweets, it is algorithmically possible to figure out where you are tweeting from, working from just proximal cues (particularly the mention of toponyms, or placenames.) The researchers analyze 13 million tweets and figure out the basic thresholds they need to infer location. Priedhorsky, Culotta, and Del Valle note that the findings have implications for privacy: “In particular, they suggest that social Internet users wishing to maximize their location privacy should (a) mention toponyms only at state- or country-scale, or perhaps not at all, (b) not use languages with a small geographic footprint, and, for maximal privacy, (c) mention decoy locations. However, if widely adopted, these measures will reduce the utility of Twitter and other social systems for public-good uses such as disease surveillance and response.”

Related: Also see other interesting ACM conference papers such as “The Language that Gets People to Give: Phrases that Predict Success on Kickstarter” and “Designing for the Deluge: Understanding & Supporting the Distributed, Collaborative Work of Crisis Vounteers. (A special thanks and hat tip to Meredith Ringel Morris of Microsoft Research, who co-chaired the papers committee for the conference.)

“An Empirical Study of Factors that Influence the Willingness to Pay for Online News”

From Universidad Carlos III de Madrid, Spain, published in Journalism Practice. By Manuel Goyanes.

Goyanes analyzes a random sample of 570 survey interviews done by the Pew Research Center to see how demographics and media use relate to paying for news and other online goods and services. Younger people, and those with incomes above $75,000, were more willing to pay for online news. Twitter users showed an increased willingness to pay. Goyanes states that “news organizations [should] consider Twitter not only a mechanism to distribute breaking news quickly and concisely, but also a marketing and interactive platform with which they can convince new customers to pay for their content through innovative marketing and advertising campaigns.”

“Facebook ‘Friends’: Effects of Social Networking Site Intensity, Social Capital Affinity and Flow on Reported Knowledge-gain”: From San Diego State University, published in The Journal of Social Media in Society. By Valerie Barker, David M. Dozier, Amy Schmitz Weiss, and Diane L. Borden.

The study adds to the growing and voluminous literature on the human motivations behind activity on social media. The researchers set out to assess what makes people learn things on Facebook, and under what conditions they are more likely to acquire knowledge. Which quality is most important? As you might expect, it’s the desire to connect. The study analyzes a subset of data (236 persons) from telephone surveys with Internet users conducted in 2012. Barker, Dozier, Schmitz Weiss and Borden conclude that it is not the intensity of participation in social networking sites that is the crucial factor in driving them to pick up knowledge. What matters most, it turns out, is a “sense of community and likeness felt for weak ties online” — social capital affinity — in terms of acquiring knowledge both through focused tasks and incidentally.

Keywords: Twitter, Facebook, news, social media

Expert Commentary